How the EU AI Act Impacts AI Hiring in Europe (2026 Guide)

The EU AI Act has moved AI hiring from a pure scale and speed problem into a governance and risk problem. In 2026, organisations building, buying, or deploying AI in Europe are increasingly hiring with one question in mind: can we evidence compliance, not just capability? That shift is already changing job design, approval chains, technical workflows, and the profile of AI leadership.

For high-growth teams, the EU AI Act's impact on hiring is most visible in two areas:

- AI is treated as a regulated capability, especially where it touches people, safety, or critical infrastructure.

- Hiring plans expand beyond ML engineering into AI governance, risk classification, and audit readiness.

If you are building an AI team across borders, a specialist partner that understands regulated hiring can reduce both execution risk and time-to-hire. Optima supports regulated, business-critical AI hiring through its AI Recruitment Agency in Europe capability.

What Is the EU AI Act?

The EU AI Act (formally Regulation (EU) 2024/1689) is the first major, cross-sector legal framework that regulates AI based on risk classification. It applies to providers, deployers, importers, distributors, and product manufacturers, and it can apply extraterritorially: organisations established outside the EU are in scope when they place AI systems on the EU market or when those systems are used in the EU.

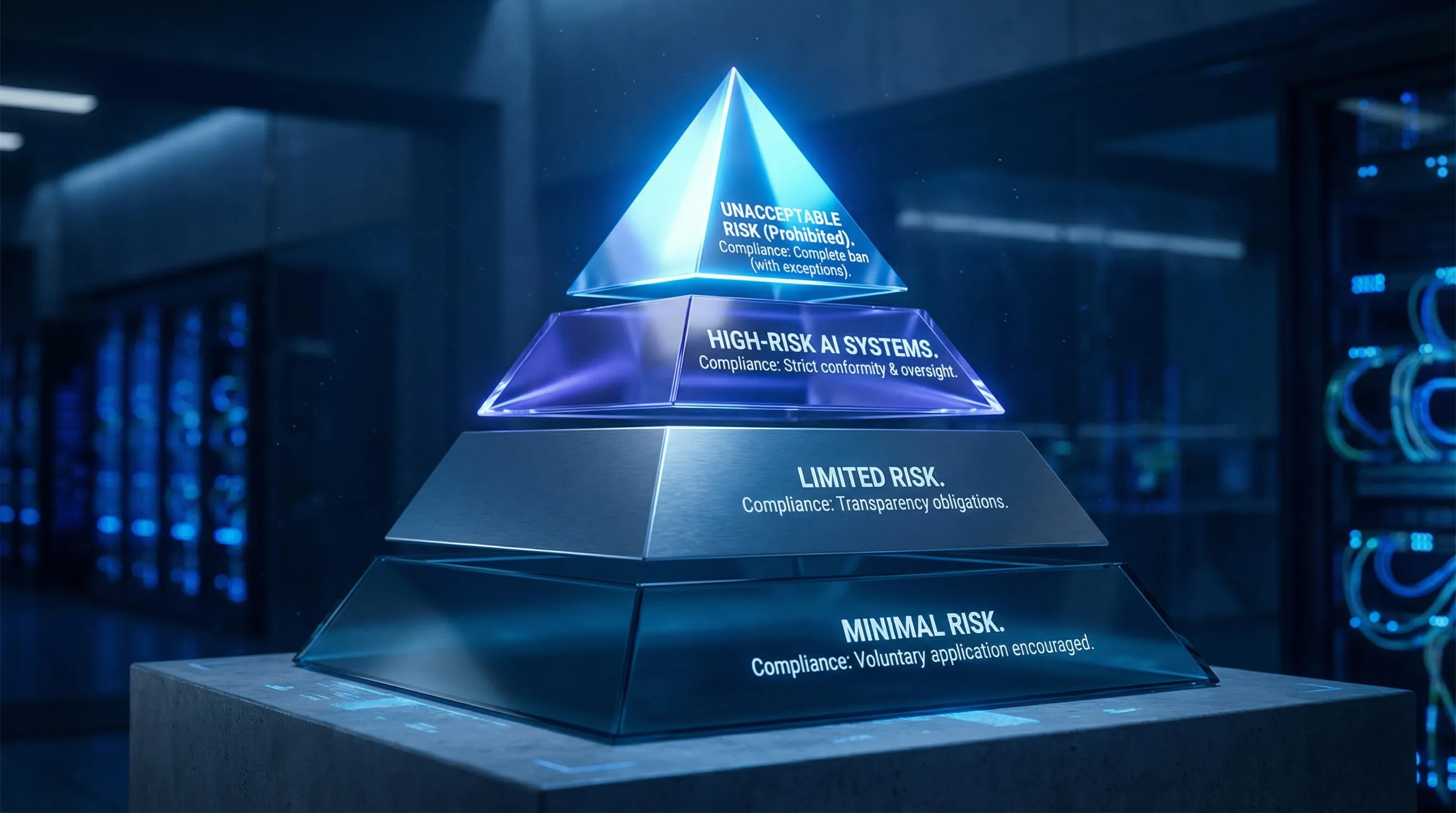

The risk-based classification system

At a high level, the Act groups AI use cases into tiers, with stricter obligations as risk increases:

- Unacceptable risk: certain AI practices are prohibited.

- High-risk AI systems: permitted, but subject to extensive compliance duties.

- Limited-risk AI: transparency obligations in specific situations.

- Minimal-risk AI: largely unregulated under the Act.

High-risk vs limited-risk AI in employment contexts

For AI hiring leaders, the key point is that employment-related AI systems can fall into the high-risk category. Annex III of the Act lists AI systems used for recruitment and selection (for example, CV screening, ranking, or candidate evaluation) among its high-risk use cases, because they can materially affect an individual’s career and livelihood.

That changes how organisations must approach:

- Data protection and data governance (including quality, representativeness, and traceability).

- Model transparency and documentation.

- Human oversight and accountability.

- Ongoing monitoring, incident handling, and evidence retention.

For official background and updates, the European Commission’s AI regulatory framework page is a useful starting point.

Timeline for enforcement (what matters in 2026)

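The AI Act is being implemented in phases rather than as a single “go-live” date. It entered into force on 1 August 2024, the prohibitions on unacceptable-risk practices have applied since February 2025, and, under the Act as adopted, most obligations for high-risk systems are scheduled to apply from August 2026. In 2026, many organisations are therefore actively preparing for obligations that apply to high-risk systems, governance structures, documentation, and vendor due diligence.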

The practical takeaway for hiring is straightforward: the closer your AI is to regulated, high-impact use cases, the more your hiring plan must include compliance capacity and audit capability, not only product and engineering delivery.

Why the EU AI Act Changes AI Hiring Strategy

The EU AI Act does not just create new paperwork. It changes operating models. That is why the EU AI Act's recruitment implications are showing up in workforce planning and org design.

Compliance obligations create permanent “new work”

For high-risk AI systems, organisations may need to implement and maintain:

- Risk management processes (including documented AI risk assessment and mitigation).

- Data governance controls and lineage (what data, why it is used, where it came from, and how it is handled).

- Technical documentation and record-keeping suitable for internal review and external scrutiny.

- Human oversight mechanisms that are real in practice (clear escalation, intervention rights, and decision logging).

- Accuracy, robustness, and cybersecurity measures that can be evidenced.

This drives AI compliance hiring in Europe because teams need people who can build repeatable compliance operations.
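To make the human-oversight item above more concrete (clear escalation, intervention rights, and decision logging), here is a minimal Python sketch of a decision log entry. Everything in it is an illustrative assumption: the `OversightRecord` fields, the rule that an override must carry a written rationale, and the JSON-lines storage format are hypothetical design choices, not requirements quoted from the Act.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


def _utc_now() -> str:
    """Timestamp in ISO 8601 format, always in UTC."""
    return datetime.now(timezone.utc).isoformat()


@dataclass(frozen=True)
class OversightRecord:
    """One human review of one model output (hypothetical schema)."""
    system_id: str        # which AI system produced the output
    case_ref: str         # pseudonymous case reference, not raw personal data
    model_output: str     # what the system recommended
    reviewer: str         # who exercised oversight
    final_decision: str   # what the human actually decided
    rationale: str        # why, in the reviewer's words
    timestamp: str = field(default_factory=_utc_now)

    def __post_init__(self) -> None:
        # Assumed policy: an override without a reason is not accepted.
        if self.overridden and not self.rationale.strip():
            raise ValueError("an override must include a rationale")

    @property
    def overridden(self) -> bool:
        """True when the reviewer departed from the model's recommendation."""
        return self.final_decision != self.model_output

    def to_json_line(self) -> str:
        payload = {**asdict(self), "overridden": self.overridden}
        return json.dumps(payload, sort_keys=True)


def append_record(path: str, record: OversightRecord) -> None:
    """Append the record to an append-only JSON-lines log file."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(record.to_json_line() + "\n")


record = OversightRecord(
    system_id="cv-screener-v2",
    case_ref="case-0193",
    model_output="reject",
    reviewer="reviewer-17",
    final_decision="advance",
    rationale="Relevant experience listed under a non-standard job title.",
)
print(record.to_json_line())
```

A log like this is one way to show that intervention rights are exercised in practice, since the share of overridden outputs and the stated reasons can be reviewed later. The hiring implication is that someone has to design, own, and review such records.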

Documentation becomes a first-class engineering deliverable

In many organisations, documentation has historically been “best effort”. Under an AI regulatory lens, documentation becomes part of the product. This shifts hiring toward candidates who can:

- Write rigorous technical artefacts (model cards, datasheets, evaluation reports).

- Implement logging and traceability.

- Collaborate with legal, security, and privacy teams without slowing delivery to a standstill.

Governance structures and oversight roles expand

The Act accelerates investment in AI governance, including internal policy, controls, auditability, and vendor management. This is a major driver of AI governance recruitment in Europe, especially for companies scaling across multiple EU markets where local employment practices, works councils, and data handling expectations can vary.

Structured summary: The EU AI Act changes AI hiring strategy because it introduces a risk-based compliance operating model. That model requires documented controls (risk classification, AI risk assessment, transparency, data protection), formal oversight (governance, audits), and cross-functional execution (legal, security, HR, engineering). As a result, organisations hire more governance and assurance talent and redesign technical roles to deliver auditable systems.

New Roles Emerging Due to the EU AI Act

Many teams assumed regulation would only create work for Legal. In practice, it creates work at the intersection of product, engineering, security, and HR. Below are roles increasingly appearing in 2026 hiring plans as part of regulation-driven AI hiring in Europe.

AI Compliance Officer

An AI Compliance Officer typically owns the compliance programme for AI systems, particularly those classified as high-risk. Responsibilities often include translating regulatory obligations into operational controls, coordinating evidence collection, managing compliance calendars, and partnering with Legal, Privacy, and Security.

In hiring, look for candidates who can translate regulation into delivery routines, not only policy.

AI Governance Lead

An AI Governance Lead sets the organisation’s AI control framework: policies, review boards, decision rights, and sign-off pathways. They often define “how we build and deploy AI here” in a way that scales across teams and geographies.

This role is becoming central to the EU Artificial Intelligence Act's impact on talent because governance is now a competitive capability.

AI Risk Analyst

An AI Risk Analyst focuses on risk classification and ongoing AI risk assessment. They help teams identify whether a system is high-risk, what the control requirements are, and what “good enough evidence” looks like for internal and external stakeholders.

This role often overlaps with enterprise risk functions, but it requires AI literacy.

Model Validation Specialist

A Model Validation Specialist is responsible for independent or semi-independent verification of models, including performance, robustness, drift monitoring, bias testing, and documentation quality.

In regulated environments, this role mirrors model risk management disciplines that are common in financial services, but it is expanding into health, industrial AI, and HR tech.

AI Ethics Specialist

An AI Ethics Specialist operationalises AI ethics into practical standards, review processes, and training. They support fairness, explainability, and responsible design, and they often work closely with product teams to ensure ethical principles are measurable rather than aspirational.

Where AI touches hiring and employment, this role is frequently paired with DEI, People Ops, and legal partners.

Regulatory AI Advisor

A Regulatory AI Advisor supports interpretation, horizon scanning, and stakeholder alignment. They may sit in Legal, Compliance, or a specialised AI governance group, and they help leadership understand what needs to change in products, procurement, and processes.

For cross-border employers, this role often becomes a connector between EU requirements and non-EU operating realities.

Impact on Technical AI Roles

The EU AI Act does not reduce demand for ML engineers. It changes what “good” looks like.

Explainability and transparency become selection criteria

Engineering teams increasingly need candidates who can implement and defend:

- Explainability approaches suited to the use case (not one-size-fits-all).

- Clear evaluation narratives (what was tested, what failed, what was improved).

- Practical transparency artefacts that support internal reviews and, where necessary, external scrutiny.

This is where model transparency stops being a research topic and becomes an engineering responsibility.

Documentation-heavy workflows reshape MLOps

Modern MLOps is already about repeatability. Under regulation, it also becomes about auditability. That increases demand for engineers who can build:

- Traceable pipelines (data lineage, feature provenance, reproducible training runs).

- Strong monitoring (drift, data quality, incident triggers).

- Evidence packaging for governance reviews and AI audit requests.
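As a rough illustration of what a traceable, reproducible training run can mean in practice, the sketch below records a run manifest in Python: a hash of the training data, the code version, the hyperparameters, and the resulting metrics, all folded into one fingerprint. The `RunManifest` class, its field names, and the example values are hypothetical; real platforms usually capture this through their experiment-tracking tooling.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Dict


def sha256_bytes(data: bytes) -> str:
    """Hex SHA-256 digest, used here to identify a dataset snapshot."""
    return hashlib.sha256(data).hexdigest()


@dataclass(frozen=True)
class RunManifest:
    """Evidence record for one training run (hypothetical schema)."""
    run_id: str
    dataset_sha256: str               # which exact data was used
    code_version: str                 # e.g. a version-control commit id
    hyperparameters: Dict[str, float]
    metrics: Dict[str, float]

    def fingerprint(self) -> str:
        """Stable hash over the whole manifest.

        Keys are sorted so the same content always yields the same
        fingerprint, and any change to data, code, or settings changes it.
        """
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return sha256_bytes(payload)


# Illustrative values only; a real pipeline would hash the dataset file.
training_data = b"candidate_id,years_experience,label\n1,4,1\n2,1,0\n"
manifest = RunManifest(
    run_id="run-0042",
    dataset_sha256=sha256_bytes(training_data),
    code_version="3f2a9c1",
    hyperparameters={"learning_rate": 0.05, "max_depth": 4},
    metrics={"auc": 0.81},
)
print(manifest.fingerprint())
```

Storing the manifest next to the model artefact lets a reviewer check later that a given model really came from the stated data and code, which is the kind of evidence packaging a governance review tends to ask for.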

If your team is scaling ML platforms, you may find this perspective useful alongside regulation: MLOps Hiring Trends in Europe.

Security integration becomes non-negotiable

High-risk systems raise expectations for robustness and cybersecurity. That pushes technical hiring toward profiles that understand:

- Threat modelling for ML systems.

- Secure deployment patterns.

- Model and data access controls aligned with data protection requirements.

In practice, AI teams are hiring closer to security engineering and platform engineering than in earlier waves.

Legal and engineering collaboration becomes a core skill

One of the most underestimated shifts is cultural: regulated AI delivery requires engineers who can work with legal, compliance, and privacy teams without creating friction. Hiring processes increasingly test:

- Written communication.

- Comfort with review gates.

- Ability to design systems that satisfy risk controls while meeting product timelines.

Executive and Board-Level Implications

The AI Act is also shaping leadership hiring because it forces clearer accountability for AI risk governance.

Board accountability and governance expectations

Boards and executive committees are increasingly asking for:

- A clear inventory of AI systems and their risk classification.

- Reporting on AI risk assessment outcomes, incidents, and mitigations.

- Evidence that high-risk AI controls exist in operations, not only on paper.

This elevates AI governance from a technical concern to an enterprise risk item.

Rising demand for Chief AI Officer and AI leadership roles

In 2026, more organisations are defining senior AI leadership roles that combine delivery and governance. Depending on maturity, titles vary (Chief AI Officer, VP AI Platform, Head of Responsible AI), but the underlying requirement is similar: leaders must scale AI while building compliant systems.

This is particularly acute in sectors where AI intersects with safety, health, or employment decisions.

Why this is becoming an executive search problem

Hiring AI leaders now requires validating two dimensions:

- Can the leader deliver business outcomes with AI?

- Can the leader build compliant operating models, including governance, audit readiness, and cross-functional alignment?

That is why many firms engage specialist partners for leadership mandates. If you are assessing leadership options, see Optima’s focus on Executive Search for AI & Deep Tech Leaders.

How Companies Can Prepare Their AI Hiring Strategy

A strong 2026 hiring plan treats compliance as capacity, not as a last-minute review.

Role mapping based on risk classification

Start with your AI inventory and map roles to the systems most likely to be high-risk. If your organisation uses AI for recruitment, selection, performance management, or similar decisions, assume higher scrutiny and build roles that can support oversight.

Common outputs include a governance org design, a control map, and a prioritised hiring plan tied to the AI roadmap.
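The mapping step can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: the tier assigned to each example system and the `ROLES_BY_TIER` table are hypothetical, and classifying a real system requires legal assessment rather than a lookup table. The role titles are the ones described earlier in this guide.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


@dataclass(frozen=True)
class AISystem:
    name: str
    use_case: str
    tier: RiskTier  # assumed to come from a prior classification exercise


# Hypothetical staffing assumptions per tier, for illustration only.
ROLES_BY_TIER: Dict[RiskTier, List[str]] = {
    RiskTier.HIGH: [
        "AI Governance Lead",
        "AI Compliance Officer",
        "AI Risk Analyst",
        "Model Validation Specialist",
    ],
    RiskTier.LIMITED: ["AI Governance Lead"],
    RiskTier.MINIMAL: [],
}


def hiring_plan(inventory: List[AISystem]) -> Dict[str, List[str]]:
    """Return each role mapped to the systems that create demand for it."""
    plan: Dict[str, List[str]] = {}
    for system in inventory:
        if system.tier is RiskTier.UNACCEPTABLE:
            # Prohibited practices are a stop signal, not a staffing question.
            raise ValueError(f"{system.name} is classified as prohibited")
        for role in ROLES_BY_TIER[system.tier]:
            plan.setdefault(role, []).append(system.name)
    return plan


inventory = [
    AISystem("cv-screener", "recruitment and selection", RiskTier.HIGH),
    AISystem("candidate-chatbot", "answering applicant questions", RiskTier.LIMITED),
    AISystem("spam-filter", "inbox filtering", RiskTier.MINIMAL),
]
for role, systems in hiring_plan(inventory).items():
    print(f"{role}: {', '.join(systems)}")
```

Roles that appear against several systems are natural candidates for early hires, while a role tied to a single system may start as a part-time responsibility.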

Compliance-first hiring (without freezing delivery)

The goal is not to “lawyer the roadmap”. The goal is to ensure teams can evidence good practice. That usually means hiring for:

- AI governance and compliance programme ownership.

- Model validation and monitoring.

- Engineering practices aligned with data protection requirements.

- AI audit readiness and documentation discipline.

Cross-functional hiring and clearer interfaces

Many AI failures under regulation are interface failures: unclear ownership between product, engineering, HR, privacy, and security. Consider hiring or appointing leads who can own those seams.

Cross-border talent sourcing

Regulated AI capability is scarce. Many organisations therefore use cross-border recruitment to find specialised talent in governance, validation, and MLOps. Cross-border hiring adds complexity (employment models, data access, localisation, stakeholder coverage), but it can be decisive where local markets are tight.

The shortage dynamic is not theoretical. It is visible across technical and governance profiles, especially in fast-growing hubs. For context, read: AI Talent Shortage in Europe.

Working with AI-specialised recruitment partners

An executive hiring agency can add the most value when it can validate not only credentials, but also regulated delivery experience. In practice, that means assessing candidates on:

- Experience with risk-based frameworks and audits (internal or external).

- Ability to operate across Legal, Security, and Product.

- Evidence quality: documentation habits, decision logging, incident handling.

- Leadership judgement under constraints.

Optima Search Europe specialises in business-critical hiring across Europe and globally, including AI leadership roles and cross-border recruitment where governance and compliance expectations are rising.

Frequently Asked Questions

What is the EU AI Act?

The EU AI Act is the European Union’s risk-based regulation for artificial intelligence. It sets different obligations depending on how an AI system is used and how much harm it could cause. It includes rules for prohibited practices, strict requirements for high-risk AI systems, and transparency obligations for certain limited-risk uses. For employers and AI teams, the most relevant concept is risk classification, especially where AI influences employment decisions or access to opportunities.

When does the EU AI Act take effect?

The EU AI Act is being implemented in phases. It entered into force in 2024, but many obligations apply later depending on the topic and the system type. In 2026, organisations are typically in active implementation for governance structures, risk classification work, documentation standards, and vendor due diligence, while preparing for broader enforcement of high-risk AI requirements. The practical point for hiring is that compliance capability is needed ahead of enforcement deadlines, not after.

Does the EU AI Act affect hiring?

Yes. The EU AI Act affects hiring in two ways. First, AI tools used in recruitment and HR decision-making can fall into high-risk categories, which increases compliance, documentation, and oversight requirements for HR and TA teams. Second, the regulation changes the talent market by increasing demand for AI governance, model validation, and risk roles. Together, these effects drive more compliance-driven hiring and stronger cross-functional collaboration between HR, Legal, Privacy, and Engineering.

What roles are most impacted by AI regulation?

The most impacted roles are those tied to governance and assurance, plus technical roles that must deliver auditability. Examples include AI Compliance Officer, AI Governance Lead, AI Risk Analyst, Model Validation Specialist, and Regulatory AI Advisor. On the engineering side, ML platform and MLOps roles are also affected because they need to implement traceability, monitoring, and documentation. Senior leadership roles are impacted as well because boards increasingly expect clear AI risk governance and accountable ownership.

Do companies need AI compliance officers?

Many do, particularly if they develop, deploy, or procure AI systems that may be classified as high-risk. An AI Compliance Officer (or equivalent) helps translate regulatory obligations into operating routines: control design, evidence collection, review calendars, and stakeholder coordination. Not every organisation needs a standalone role immediately, but most need clear ownership of AI compliance work. As AI portfolios grow, this responsibility often becomes too large to remain a part-time task.

How can companies adapt their AI hiring strategy?

Start with an inventory of AI systems and apply risk classification to identify where high-risk obligations are likely. Then map roles to the new work created by compliance: AI governance, AI risk assessment, model validation, documentation and transparency, and AI audit readiness. Build cross-functional interfaces so Legal, Privacy, Security, HR, and Engineering have clear decision rights. Finally, plan for cross-border recruitment if local supply is limited, and partner with recruiters who can validate regulated AI delivery experience.

Conclusion

In 2026, the EU AI Act is accelerating a clear shift: AI hiring in Europe is becoming compliance-driven and risk-led. Organisations are adding governance layers, building audit-ready engineering practices, and recruiting new assurance roles to manage high-risk AI systems.

The companies that adapt fastest are treating AI governance as a capability that must be staffed, measured, and led. That means hiring beyond core ML delivery into AI compliance, validation, and executive oversight.

If you are hiring AI leadership or building regulated AI teams across Europe, Optima Search Europe can support with specialist, cross-border search aligned to the realities of AI regulation and modern AI governance.